.NET - Performance comparison with Parallel Extensions .NET 3.5

- Date:

- Author: Stefan Cruysberghs

Some days ago Microsoft released a Community Tech Preview of the Parallel Extensions for .NET 3.5. Parallel Extensions is a managed programming model which makes it easier for developers to take advantage of multiple cores and processors. The first demo of this technology I saw was on the Microsoft Developer Days in Ghent in March 2007. I was quite impressed because with almost no effort the performance of some tasks can be increased drastically.

The Parallel Extensions contain 2 parts; Parallel Language Integrated Query (PLINQ) and the Task Parallel Library (TPL). PLINQ implements some parallel execution techniques underneath the LINQ querying model. TPL is focused on data and task parallelism expressed in a more imperative style of programming, suitable for cases where problems cannot be expressed as queries.

My notebook has a Intel Dual Core T7200 2Ghz processor so I was curious about the performance gains on my computer.

The CTP of Parallel Extensions includes 5 demo applications (LINQ Ray Tracer, PLINQExamples, Raytracer, Sudoku, TaskParallelLibraryExamples) which can be found in the C:\Program Files\Microsoft Parallel Extensions Dec07 CTP folder.

I have modified the PLINQExamples and TaskParallelLibraryExamples projects a little bit so that all durations (stopwatch elapsed time in milliseconds) are outputted as semicolon separated CSV files. I executed both demo applications 10 times and imported these results in Excel. Then I created some pivot tables and charts.

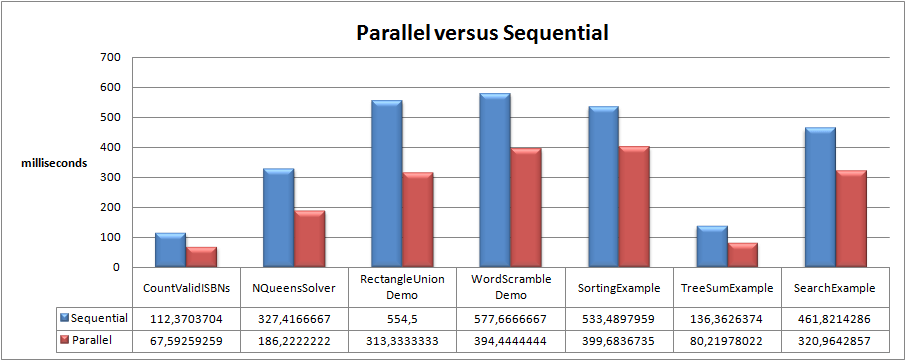

Increase of performance

This is a pivot chart with averages of all tests. As you can see, parallel execution is faster for almost all provided examples.

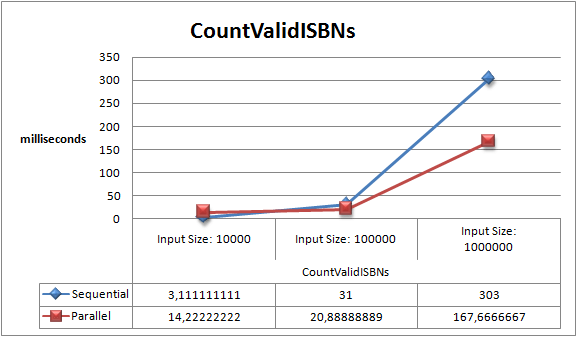

I also created some charts with extra details. The CountValidISBN example uses PLINQ to count valid ISBN numbers in an input data source. As you can see, you gain performance when the input size increases.

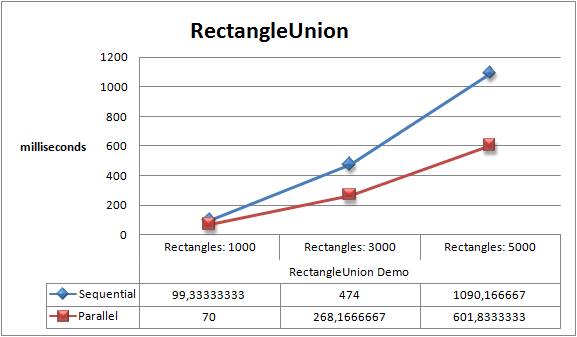

Same speedup result with the RectangleUnion example. Given N rectangles with sides parallel to x and y axes, the example uses PLINQ to compute the area of the union of all of the rectangles.

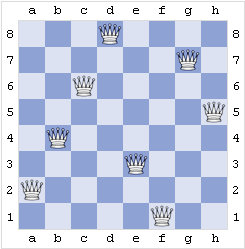

N Queen Puzzle

|

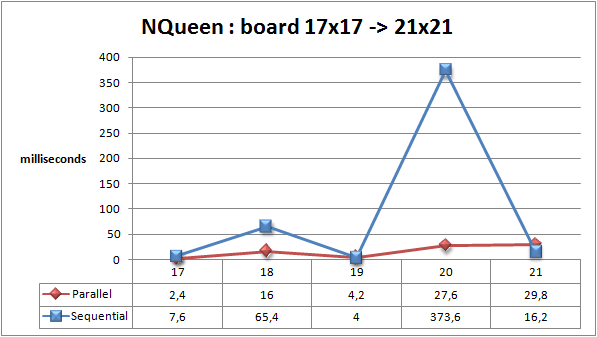

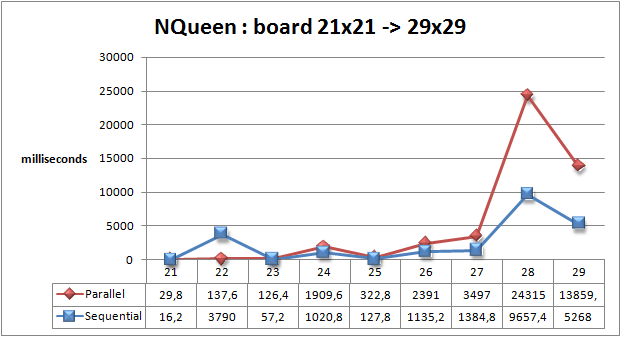

Taking a look at the results in Excel, I also noticed that there were some tests where parallel execution sometimes seems to be slower then sequential execution. Of course parallelism imposes overhead associated with partitioning data and tasks, communication/synchronization between the parallel execution entities (tasks, threads, …), and merging or aggregation at the end to produce the final result. Clearly all of this extra work can make parallel execution more expensive. I took a closer look at the TaskParallel NQueen example which is a more complex algorithm. The eight queens puzzle is the problem of putting eight chess queens on an 8×8 chessboard such that none of them is able to capture any other using the standard chess queen's moves. (http://en.wikipedia.org/wiki/Eight_queens_puzzle) This NQueen example uses the TaskParallel Library to solve the more general n queens puzzle. So I modified the NQueen example a little bit and executed it again and again. The detailed time measuring results are always the same. Parallel execution is faster when the board is smaller then 22x22 but when the board size exceeds 22, there are parallel performance slow-downs. |

Board |

17x17 |

18x18 |

19x19 |

20x20 |

21x21 |

22x22 |

23x23 |

24x24 |

25x25 |

26x26 |

27x27 |

28x28 |

29x29 |

Sequential |

8 | 65 | 4 | 374 | 16 | 3790 | 57 | 1021 | 128 | 1135 | 1385 | 9657 | 5268 |

Parallel |

2 | 16 | 4 | 28 | 30 | 138 | 126 | 1910 | 323 | 2391 | 3497 | 24315 | 13859 |

Querying Outlook

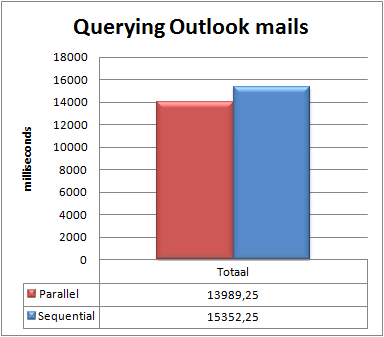

Not all programs will be immediately amenable to parallel execution. Some tasks are inherently sequential and they will not gain performance when using a parallelism approach. So finally I was trying to modify one of my own applications. Will my querying-Outlook-mails-with-LINQ-application benefit from using parallelism ?

I created two methods which will be executed 20 times. Both methods will retrieve all Outlook mails from last month. Parallel extensions will be used in the LINQ query and in the foreach loop of the second method.

using System;

using System.Collections.Generic;

using System.Linq;

using System.Text;

using System.Xml;

using System.Diagnostics;

using System.Threading;

namespace ConsoleApplicationPLINQ

{

class Program

{

static void Main(string[] args)

{

OutlookItems outlookItems = new OutlookItems();

for (int i = 0; i < 20; i++)

{

OutlookMails(outlookItems);

OutlookMailsParallel(outlookItems);

}

Console.ReadLine();

}

static void OutlookMails(OutlookItems outlookItems)

{

Stopwatch sw = Stopwatch.StartNew();

var queryMails = from mail in outlookItems.SentMailItems

where mail.SentOn > DateTime.Now.AddMonths(-1)

select mail;

foreach (var mail in queryMails)

mail.FlagStatus = Microsoft.Office.Interop.Outlook.OlFlagStatus.olFlagComplete;

Console.WriteLine("Sequential;{0}", sw.ElapsedMilliseconds);

}

static void OutlookMailsParallel(OutlookItems outlookItems)

{

Stopwatch sw = Stopwatch.StartNew();

var queryMails = from mail in outlookItems.SentMailItems.AsParallel()

where mail.SentOn > DateTime.Now.AddMonths(-1)

select mail;

Parallel.ForEach(queryMails, mail =>

{

mail.FlagStatus = Microsoft.Office.Interop.Outlook.OlFlagStatus.olFlagComplete;

});

Console.WriteLine("Parallel;{0}", sw.ElapsedMilliseconds);

}

}

}This is the result. The parallel approach makes it faster but the difference is small (13 & 15 seconds).

I was also trying what would happen if would like to update a global variable. So in the foreach loop I increased the attachmentCount variable which is declared outside the loop.

int attachmentCount = 0;

Parallel.ForEach(queryMails, mail =>

{

attachmentCount = mail.Attachments.Count;

});

Console.WriteLine("Parallel;{0};{1};", attachmentCount, sw.ElapsedMilliseconds);The sequential method always returns 42 but the parallel method returns 14, 3, 2, 7, 13, 8, ... So be careful, not every task can be parallelized !

Conclusion

I really like LINQ so I welcome the PLINQ technology enthusiastically. When you have to process large blocks of data, the PLINQ extension will surely improve the performance of your application. But, don't expect too much.

The ParallelTask library is also a great extension but it is more complex. You also have to keep in mind that not every algorithm will be executed faster when it will be spread among several cores or processors because parallelism has it costs.